How can we use AI to strengthen human connections?

I am both excited about the developments in AI and worried about its impact on us humans.

I am excited about the new AI capabilities that come out each week, and the way they empower us to do what we would never have dreamt of doing. But I am disappointed that AI seems to offer whatever is within easy grasp and has a wow factor, not what we really need. The sentiment is familiar: "I wanted AI to do all my household chores so I can write poetry - yet we have been offered AI that can write poetry while I am still cleaning my kitchen and taking out the trash by myself."

I also worry about the impact our adoption of AI has on the way we work, communicate with one another, and function as human beings. Will AI chatbots be our new BFFs? What happened to human connections?

Recently I have been researching examples of how AI can be used to strengthen human connections instead of replacing them. Dating apps will claim they are a prime example: AI helps match their users with an ideal partner. But I think there are far more opportunities. I like how 1001 Unicorns makes it their core mission not to replace human storytelling, but to create moments of bonding between parents and children as they create stories together based on the kids' imagined characters. (Full disclosure: I have been a technical advisor on the project.)

I will write more about my research findings in the coming months.

AI in the Arts - is it good or bad?

AI is capable of increasingly sophisticated levels of content creation. Instead of automating boring tasks (I am still waiting for a robot that unloads my dishwasher and takes out the trash), we get AI that can create paintings, music and film, even write entire books - the things that humans enjoy doing. Many artists are concerned about losing their livelihood and about the loss of culture as we knew it. And rightly so.

However, I can't help but wonder if collaborating with AI could spark new forms of creativity and art.

In addition to writing articles and books, I've been dabbling in songwriting for a while. Unfortunately, I've yet to convince a musician to perform my songs, and I have to admit that I lack any musical ability myself. Last month, I decided to experiment with an AI platform (udio.com) to set some of my lyrics to music. I was pleasantly surprised at how easy it was to create first a blues version and then a more upbeat version of my song (although it was frustratingly difficult to get exactly what I had in mind).

While it feels a little sad not to collaborate with human musicians, as a songwriter, it's incredibly valuable to be able to hear my lyrics brought to life in different styles within minutes. Whether it’s jazz, punk rock, or a simple acoustic arrangement, this technology gives me the opportunity to experiment with my songs in ways that wouldn't be possible otherwise.

Yes, some art forms may be threatened by the surge of AI-generated content, but I believe many artists will find themselves empowered by these new technological tools. They could speed up the creative process or even spark entirely new ideas.

The need for AI models for different cultural contexts

It is exciting that it is becoming easier to fine-tune (or post-train) models with content specific to different languages and cultures. I had a brief chat with Yann LeCun on this topic last week, on the margins of the Leaders in AI Summit.

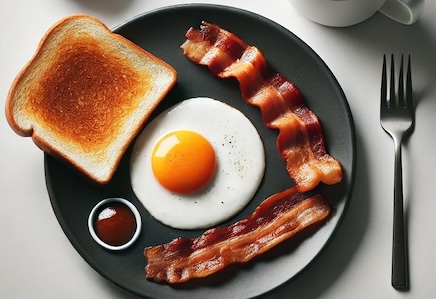

It is well known that the large foundation models have been trained mainly on either English or Chinese language content. There is cultural bias too: ask DALL-E for a picture of breakfast and it will likely show you bacon and eggs - not idli or tortillas or muesli or other breakfasts that are common in 90% of the world.

Fortunately, it is becoming easier for countries or communities to fine-tune or retrain open-source foundation models (such as Meta's Llama 3 or Alibaba's Qwen2) with local content. And it is important that they dedicate resources to doing so. Good efforts have already been made for a number of languages: Technology Innovation Institute's recently released Falcon Mamba 7B (Arabic), several models supporting French, Masakhane (Swahili, Yoruba) and KoGPT (Korean) are some examples. Multilingual models such as BLOOM and YAYI2 are good resources too.

We need models that represent local cultures. And it is not a one-time effort. As models keep evolving (and at some point may use very different architectures than current LLMs), it takes a constant effort to produce new versions of these models for each cultural context. Countries need to establish capacities dedicated to doing so, which means putting the enabling conditions in place to train AI engineers.

The art of asking the right questions

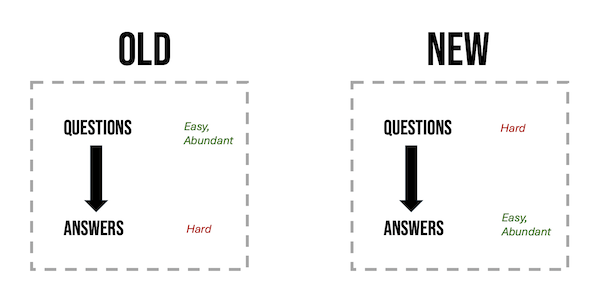

Traditional wisdom suggests that asking questions is easy, but providing answers is challenging. Parents enduring the never-ending "why" phase with their children can attest to that. However, with the rise of chatbots fueled by LLMs and RAG systems that access internal data, answers are now abundant and immediate. The real challenge lies in posing the right questions.

Some professions have been trained to ask good questions: doctors, lawyers, and investigators like Columbo, Miss Marple and Inspector Clouseau. But most of us are terrible at it.

I remember when we first started offering advanced analytics and ML services, we told business leaders "We can get you amazing insights into anything we have data on. What would you like to know?" And no one had an answer. The upward reporting streams in most organizations have been, and often continue to be, supply-driven: people report quarterly sales figures, expenditures and market data because that’s what's available. Now that the supply is unlimited, we need to change the information flows to be demand-driven.

The key in the next decade will be to ask the right questions. We all need to become more proficient in that skill.

How dangerous is AI?

I attended two talks by AI greats in the last few weeks: one by Geoffrey Hinton last month, and one by Yann LeCun at an event in New York yesterday.

They represent two different visions when it comes to the potential dangers of AI. Hinton sees us at a crossroads where we need to tread very carefully lest we end up destroying humanity. LeCun, on the other hand, tells us that for all its hype, today's AI has less intelligence than a cat, and that concerns about the power of AI are grossly exaggerated. So who's right? In fact, I agree with both in some respects.

I agree with LeCun that the capabilities of AI are still very narrow and limited when compared to humans. But, like Hinton, I do worry. A lot.

What I worry about is not what AI can do, but rather how we collectively react to it. The way we enthusiastically adopt it in our lives, without much thought.

Even with the limited capabilities of AI today, and what will likely be possible in the next couple of years, I think we can easily end up ruining our society. I believe we underestimate the risk of flooding ourselves with mediocre content that can be created at near zero cost. We underestimate how AI, along with other factors, will necessitate a complete rethinking of our education system. The ability to refine everything with AI will make us less accepting of the originality, but also the imperfection, of human-created content. Our protocols for communicating with one another will change, and we will often opt for AI as an intermediary. And, as we become increasingly reliant on AI, it will affect our autonomy.

While I do not fear human extinction, I am concerned about the devaluation of our culture and of humans in general.

What does it mean to be Human?

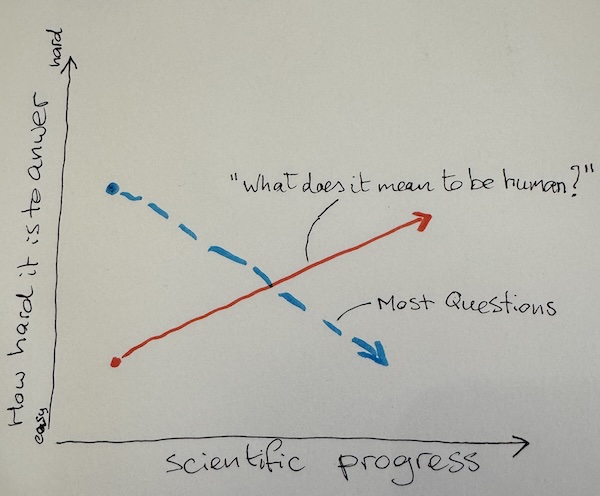

Most questions get easier over time. How the sun and the planets move around the earth (or rather, how we move around the sun) was a mystery for some time, but telescopes and math helped us come up with good models. It took some time to figure out the cause of bubonic plague, but medical science got the better of it and we managed to mostly eradicate the disease.

I have been exploring the question of what it means to be human, and I realized that it is a rare question in that it gets harder to answer the more technological and scientific progress we make.

A popular answer used to be that "sophisticated use of language" was what distinguished us from plants and animals and Tamagotchis and robots. But the latest LLMs are almost as good as, and sometimes better than, humans at using language(s).

CRISPR/Cas9 has the potential to confuse our ideas about the nature of humans even more. We like to think that each one of us is unique, but gene editing and cloning may make that untrue.

Virtual Reality makes us spend time with, get attached to, perhaps even love entities that don't exist, at least not physically.

And AI effortlessly takes over many tasks that humans have always considered their particular contribution to the world, their source of fulfillment.

I cannot even imagine what people 50 or 100 years from now will think it means to be Human. Perhaps it will have become a moot question at that point.